It has been an intense week for Google in terms of news and artificial intelligence. Yesterday the company surprised everyone by introducing Bard, its own conversational AI. Today it held Live from Paris, a virtual event where it presented several new features to improve its users' search experience, all of them, of course, also driven by artificial intelligence.

It should be noted that the tech giant had already previewed some of these features at its Search On 2022 event last September. Even so, they are worth revisiting, along with the other new features that accompany them and that aim to make "exploring information in Search even more natural and intuitive".

We’re applying our advancements in AI to make exploring information in Search even more natural and intuitive, from using Lens to “search your screen” through Assistant on Android to expanding multisearch globally. See what else is new. https://t.co/JYzfm787lk

—Google (@Google) February 8, 2023

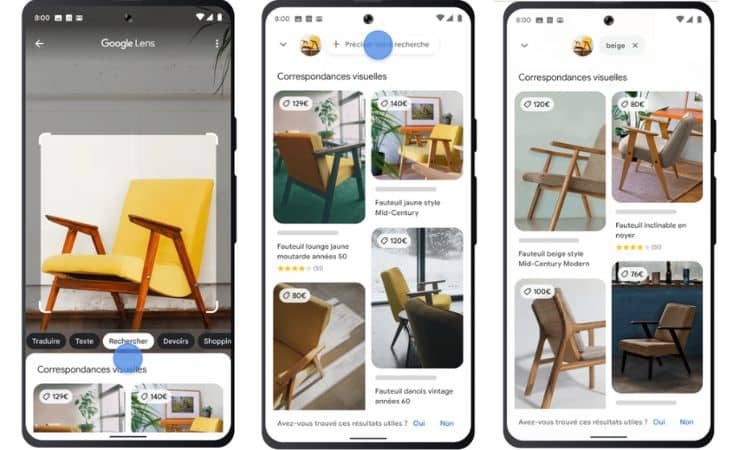

Multisearch: text and images at your service

This was, without a doubt, the star feature Google presented at Search On 2022. This time, the company mentioned it again to point out that it can now be used in every country and language where Google Lens is available.

Multisearch combines the AI and computer vision of Google Lens with text search. This way, Google brings together the information and context provided by both methods to offer more complete and accurate results. Using it is simple and intuitive: open Google Lens, take or upload a photo, then swipe up on the results bar and tap "Add to your search" to type whatever you want to add to your query.

Find what you need near you thanks to local search

Multisearch also lets you choose to look for local results. To do so, just type "near me" after taking or uploading the image for your search. This is a way to support local businesses and encourage people to shop at them. For now, this feature is only available in English in the US, but Google has said it will roll out to the rest of the world in the coming months.

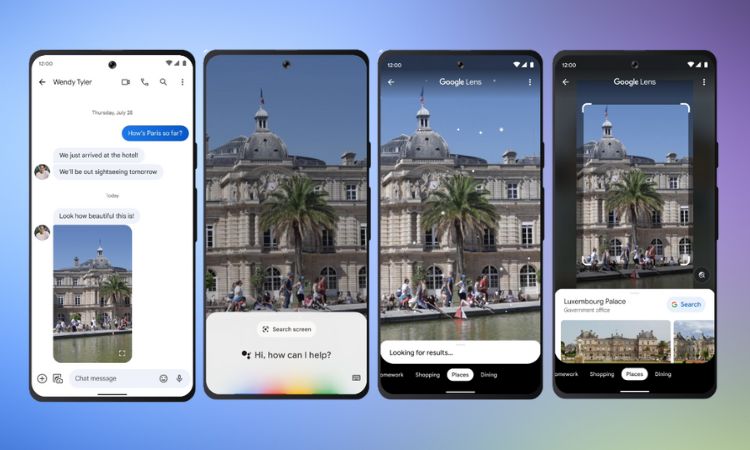

Google Lens: “If you can see it, you can search it”

With this phrase, Google introduced the latest evolution of Lens, its visual search technology, which until now let users identify and look up information about the objects that appeared in any photo, or directly on their phone's screen when using the camera. Google Lens was born as an app in 2017 and has been improving its capabilities ever since, thanks to advances in AI. According to Google's own figures, Lens is currently used more than 10 billion times a month.

Users will now be able to use Lens to "search your screen" anywhere on Android. This will let you search what you see in photos or videos across all kinds of websites and apps (such as messaging and video apps) without leaving the app or interrupting the experience. In other words, WhatsApp users will be able to use Lens to identify the elements that appear in a video sent through Meta's app, without having to leave it.

"Suppose some friends send you a message with a video of them hanging out in Paris. Suddenly, a monument appears in the background. Want to know what it is? Long-press your Android phone's power or home button (the one that opens Google Assistant). Next, tap 'Search screen'. Lens identifies it as the Palais du Luxembourg! Tap again to get more information," Google explains in its announcement.

Google Live View: Street View + augmented reality + AI

Live View is "a radically new way to explore places," Google says of this update to how places are displayed in Maps, its popular mapping tool. It is a new evolution of the well-known Street View that "makes you feel like you're already there... even if you've never been. Thanks to advances in AI and computer vision, this feature fuses billions of aerial and Street View images to create a digital model of the world, bursting with detail. Plus, it overlays useful information such as the weather, traffic and how busy a place is."

Google's new Live View combines artificial intelligence and augmented reality to improve how users search for places. The feature, already active in London, Los Angeles, New York, Paris, San Francisco and Tokyo, will arrive in Madrid, Barcelona and Dublin "in the coming months" and will even bring augmented reality to indoor public spaces. As Google explains in one example, "it overlays augmented reality arrows that show you which way to walk to find what you're looking for quickly and safely. In the coming months, this feature will help you navigate more than a thousand new airports, train stations and shopping malls."

In addition, these features will accompany users on their journeys regardless of the mode of transport, including cycling and public transit, showing useful information such as the estimated arrival time and which direction to take. These glanceable directions will start rolling out worldwide on Android and iOS devices in the coming months.

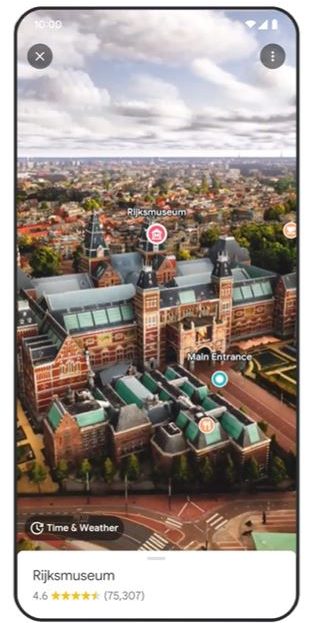

Google Immersive View, 3D representations of reality in Maps

Within Maps, Google also announced the arrival of Immersive View, which launches starting today in London, Los Angeles, New York, San Francisco and Tokyo. The feature uses Google's huge collection of Street View images and aerial photographs to generate three-dimensional recreations of the real world.

As Google explains: "Let's say you're planning a visit to the Rijksmuseum in Amsterdam. You can virtually fly over the building and see where the entrances are. With the time slider, you can see what the area looks like at different times of day and what the weather will be like. You can also spot where it tends to be most crowded, so you have all the information you need to decide where and when to go. If you're hungry, glide down to street level to explore nearby restaurants, and even peek inside to quickly get a feel for a place's vibe before you book.

To create these realistic scenes, we use neural radiance fields (NeRF), an advanced artificial intelligence technique that turns ordinary images into 3D representations. With NeRF, we can accurately recreate the full context of a place, including its lighting, the texture of materials and what's in the background. All this lets you see whether a bar's moody lighting is the right ambience for a date night or whether the views at a café make it the ideal place for lunch with friends."

Contextual translation: more accurate translations

The tech giant also made big announcements about Google Translate and its new AI-powered capabilities. The first one we will look at improves translations by understanding the context surrounding a sentence.

Imagine getting an accurate translation that uses the right turns of phrase, local idioms or the most appropriate words for the topic you want to discuss. That will now be possible, since Google Translate will offer translations with more context, descriptions and examples in the chosen language. Goodbye to words and phrases with multiple meanings and not knowing which one is correct in each case.

This feature will reach our devices in the coming weeks and will be available in English, Spanish, French, German and Japanese, among other languages.

It is also worth highlighting the addition of 33 new languages to Google Translate's offline mode, among them Basque, Corsican, Hawaiian, Hmong, Kurdish, Latin, Luxembourgish, Sundanese, Yiddish and Zulu.

Image translator: translate respecting the original image

Finally, another feature that had already been announced last September is the improved image translation built into Google Lens. It already let us translate text in images just by pointing the camera at it, but the result did not blend naturally into the image; instead, it appeared in bars that covered part of the picture.

That is now a thing of the past: thanks to artificial intelligence, Google Lens can translate the text in images and accurately recreate the background behind each word.

Photo: Google
